The Architecture of Human Error: An Exhaustive Analysis of Cognitive Biases, Psychological Mechanisms, and Strategic Mitigation

1. Introduction: The Cognitive Miser and the Evolutionary Roots of Irrationality

The human intellect is often celebrated as the pinnacle of biological evolution, a sophisticated engine capable of abstract reasoning, complex linguistic construction, and future-oriented planning. However, beneath this veneer of rationality lies a cognitive architecture that is fundamentally constrained, prone to systematic error, and heavily reliant on mental shortcuts. Contemporary psychology, neuroscience, and behavioral economics have converged on a model of the human mind not as a perfectly rational calculator, but as a “cognitive miser”—a system designed to conserve mental energy by relying on heuristics, or rules of thumb, to navigate the overwhelming complexity of the environment.1

Cognitive biases are not random errors or momentary lapses in concentration. They are, as defined by the International Organization for Standardization and leading psychological literature, systematic patterns of deviation from norm or rationality in judgment.3 These deviations occur when individuals, driven by epistemic goals, non-consciously deviate from objective reality by relying on irrelevant or partially relevant information while ignoring that which is truly diagnostic.3 The implications of these systematic errors are profound, extending from the micro-level of interpersonal conflict and individual financial mismanagement to the macro-level of strategic corporate failure, medical diagnostic error, and geopolitical instability.

The origins of these biases are deeply rooted in the evolutionary history of the species. The brain, which consumes a disproportionate amount of the body’s energy resources, evolved to prioritize speed and survival over statistical precision. In the ancestral environment, the cost of a false positive (assuming a rustle in the grass is a predator when it is not) was negligible compared to the cost of a false negative (assuming the rustle is the wind when it is a predator). Consequently, the human mind developed a “smoke detector principle,” biased toward over-detecting patterns and agency where none exist. While adaptive in the Pleistocene era, these same mechanisms manifest today as distinct cognitive liabilities: the tendency to see non-existent correlations, the inability to intuitively grasp probability, and the rigid adherence to initial beliefs despite contradictory evidence.1
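
The asymmetry behind the “smoke detector principle” can be made concrete with a small expected-cost comparison. The sketch below is illustrative only: the function names and the cost figures are assumptions chosen for the example, not values taken from the literature.

```python
def should_flee(p_predator: float, cost_false_alarm: float, cost_missed_predator: float) -> bool:
    """Return True when fleeing has the lower expected cost.

    Fleeing wastes `cost_false_alarm` whenever no predator is present;
    staying incurs `cost_missed_predator` whenever one is present.
    """
    expected_cost_flee = (1 - p_predator) * cost_false_alarm
    expected_cost_stay = p_predator * cost_missed_predator
    return expected_cost_flee < expected_cost_stay


def flee_threshold(cost_false_alarm: float, cost_missed_predator: float) -> float:
    """Probability of a predator above which fleeing is the cheaper response."""
    return cost_false_alarm / (cost_false_alarm + cost_missed_predator)


# Hypothetical costs: a false alarm costs 1 unit, a missed predator costs 1000 units.
print(should_flee(0.01, 1, 1000))   # True: fleeing is cheaper even at a 1% chance
print(flee_threshold(1, 1000))      # roughly 0.001
```

Under these assumed costs, reacting is the cheaper policy whenever the chance of a predator exceeds about one in a thousand, which is why a detector tuned this way produces many false alarms.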

This report provides an exhaustive analysis of the cognitive terrain of bias. It dissects the psychological and neurological mechanisms that drive these distortions, categorizes the major families of bias that afflict human reasoning, and examines their specific, high-stakes consequences in the domains of medicine, business strategy, and finance. Furthermore, it moves beyond mere identification to evaluate the efficacy of mitigation strategies, distinguishing between ineffective awareness campaigns and structurally robust “cognitive forcing functions” that can reshape the architecture of choice.

2. The Mechanics of Distortion: Dual-Process Theory and the Etiology of Error

To understand how biases function, one must first understand the operating system of the human mind. The most robust framework for this is the Dual-Process Theory, popularized by Nobel laureate Daniel Kahneman and grounded in his long collaboration with Amos Tversky, which posits that human cognition is governed by two distinct systems.5

2.1 System 1 and System 2: The Interplay of Intuition and Deliberation

Biases predominantly emerge from the interplay—and often the conflict—between these two modes of thinking.

- System 1 (Implicit/Automatic): This system is fast, automatic, emotional, and subconscious. It handles the vast majority of daily decisions, from reading a facial expression to driving a car on an empty road. It relies on heuristics—mental shortcuts that allow for rapid processing of information without heavy cognitive load. System 1 is the source of “gut feelings” and intuitive judgments.5

- System 2 (Explicit/Deliberative): This system is slow, effortful, logical, and calculating. It is recruited for complex tasks such as solving a difficult math problem or parking in a tight space. System 2 is responsible for monitoring and correcting the suggestions of System 1, but it is often “lazy,” endorsing the intuitive answers of System 1 without sufficient scrutiny.5

Most cognitive biases occur when System 1 generates an intuitive but erroneous answer (often through a process called “attribute substitution,” where a difficult question is replaced by an easier one), and System 2 fails to detect or correct the error.2 For example, when asked to estimate the probability of a complex event, System 1 substitutes the easier task of assessing how easily examples of that event come to mind (the Availability Heuristic).

2.2 The Causes of Bias: Cold Cognition vs. Hot Cognition

The literature distinguishes between “cold” cognitive biases, which arise from information-processing limitations, and “hot” motivational biases, which are driven by emotional needs.4

2.2.1 Information Processing Constraints (Cold Bias)

The human mind has a bounded capacity for information processing. We cannot attend to every variable in our environment. To cope, the brain utilizes heuristics to filter data.

- Limited Capacity: Working memory can hold only a few items at once. Consequently, we ignore base rates (general statistical information) in favor of specific, individuating information that is easier to process.1

- Noisy Retrieval: Memory is not a faithful playback mechanism but a reconstructive process. The retrieval of information is influenced by the current context, mood, and the phrasing of questions, leading to distortions such as the Framing Effect.4
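
The base-rate neglect described under Limited Capacity can be illustrated with Bayes' theorem. The following is a minimal sketch with hypothetical numbers (a 1% base rate, a 90% hit rate, and a 9% false-alarm rate); the function name `posterior` is an assumption introduced for this example.

```python
def posterior(base_rate: float, hit_rate: float, false_alarm_rate: float) -> float:
    """P(hypothesis | evidence) computed with Bayes' theorem.

    base_rate:        P(hypothesis) before seeing the evidence
    hit_rate:         P(evidence | hypothesis)
    false_alarm_rate: P(evidence | not hypothesis)
    """
    p_evidence = hit_rate * base_rate + false_alarm_rate * (1 - base_rate)
    return hit_rate * base_rate / p_evidence


# Hypothetical case: the evidence looks compelling (90% hit rate),
# but the hypothesis is rare (1% base rate).
print(round(posterior(0.01, 0.90, 0.09), 3))  # 0.092
```

Intuition tends to anchor on the 90% figure, yet once the base rate is included the probability in this example is only about 9%.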

2.2.2 Motivational Factors (Hot Bias)

Motivational biases arise from the desire to maintain a positive self-concept, reduce anxiety, or maintain social status.

- Cognitive Dissonance: When individuals hold two conflicting beliefs, or when behavior conflicts with beliefs, they experience psychological discomfort. To alleviate this, the mind unconsciously distorts information to restore consistency. This drives Confirmation Bias, where contradictory evidence is dismissed to protect the ego.8

- Emotional State: The Affect Heuristic describes how current mood influences decision-making. A positive mood can increase risk tolerance and optimism, while a negative mood can trigger risk aversion and pessimistic forecasting.1

2.3 Neurological Underpinnings

Recent research into the “Default Mode Network” (DMN) of the brain suggests that specific neural pathways are activated during introspective and social processing, which may overlap with the pathways used for heuristic judgments.6 Furthermore, the brain’s reliance on pattern matching means that once a neural pathway is established (a belief or habit), it becomes the path of least resistance. Overriding this requires significant metabolic energy in the prefrontal cortex, which is why humans are biologically predisposed to stick to established beliefs (Status Quo Bias) rather than expend the energy required to update their mental models.10

3. The Great Distorters: A Taxonomy of Key Biases

While hundreds of biases have been identified, they can be categorized into major families based on the functional error they represent.

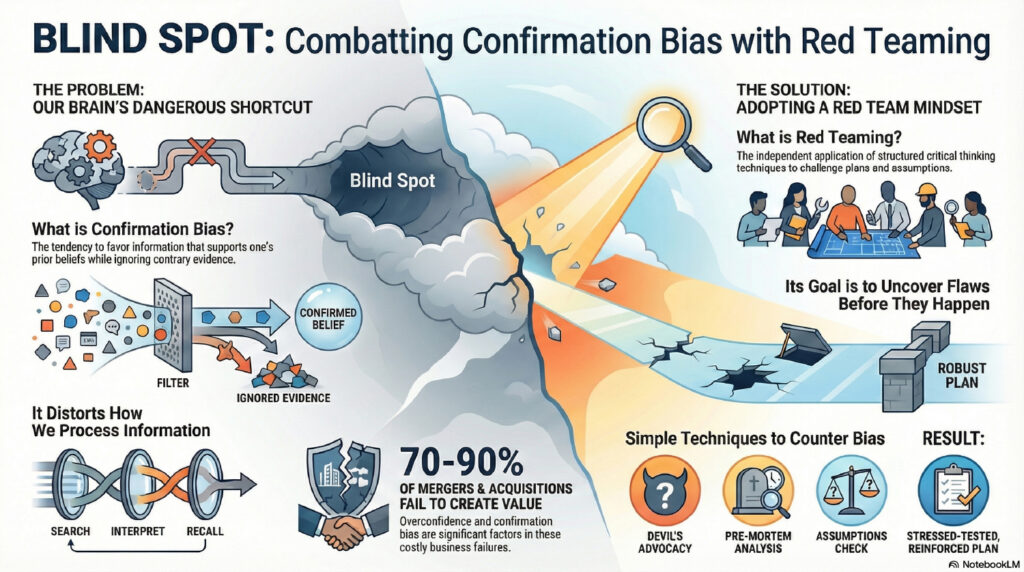

3.1 Confirmation Bias: The Mother of All Misconceptions

Confirmation bias is the tendency to search for, interpret, favor, and recall information in a way that confirms one’s preexisting beliefs or hypotheses.8 It is arguably the most pervasive and damaging bias because it creates a feedback loop that insulates the individual from reality.

3.1.1 The Tripartite Mechanism

Research indicates that confirmation bias operates through three distinct sub-processes 8:

- Selective Exposure: Individuals actively avoid information sources that might contradict their views. In the digital age, this manifests as algorithmic echo chambers where users are only exposed to content that reinforces their ideology.12

- Selective Perception: When exposed to ambiguous information, the biased mind interprets it as supporting the existing hypothesis. Two people with opposing political views can watch the same debate and both be convinced their candidate won.8

- Selective Retention: Information that aligns with prior beliefs is encoded more deeply in memory and recalled more easily. Disconfirming evidence is often forgotten or remembered as “flawed”.8

3.1.2 The Backfire Effect

A widely cited manifestation of confirmation bias is the “Backfire Effect” (closely related to Belief Perseverance), where presenting an individual with facts that disprove their misconceptions can actually strengthen their belief in the misinformation.13 One proposed explanation is that the act of counter-arguing against the new information forces the individual to generate new justifications for their old belief, entrenching it further. Later replication attempts have found the effect to be less consistent than early studies suggested, so it is best treated as a risk rather than a certainty.

3.2 The Availability Heuristic: The Bias of Salience

The availability heuristic leads individuals to estimate the frequency or probability of an event by the ease with which instances or associations can be brought to mind.14

3.2.1 Ease of Recall vs. Content

Crucially, this bias is driven by the ease of cognitive retrieval, not the content of the memory itself.16 Events that are vivid, recent, or emotionally charged are more “available” to memory than dull, abstract, or distant events.4

- Media Influence: Heavy media coverage of rare events (e.g., plane crashes, shark attacks) makes them highly available, leading the public to vastly overestimate their probability while underestimating common killers like heart disease or car accidents.16

- Recency Bias: In performance reviews or financial markets, recent events are given disproportionate weight. A stock market crash yesterday makes the risk of a crash seem essentially 100% today, even if the fundamental probability has not changed.18

3.3 Anchoring and Adjustment: The Trap of the First Impression

Anchoring describes the human tendency to rely too heavily on the first piece of information offered (the “anchor”) when making decisions.11 Subsequent judgments are made by adjusting away from the anchor, but these adjustments are typically insufficient.21

3.3.1 Mechanisms of Anchoring

Two main theories explain this phenomenon:

- Anchor-and-Adjust: Individuals start at the anchor and adjust until they reach a value that seems plausible. Because they stop at the edge of the plausible range, the final estimate remains biased toward the anchor.22

- Selective Accessibility: The anchor primes specific information in memory. If a car is priced at $50,000 (high anchor), the brain retrieves attributes of the car that justify a high price (luxury features), making a subsequent price of $40,000 seem like a bargain, even if the car is only worth $30,000.22

3.4 The Dunning-Kruger Effect: The Dual Burden of Incompetence

The Dunning-Kruger effect is a metacognitive bias where individuals with low ability in a domain overestimate their competence.4

3.4.1 The Metacognitive Deficit

This is not merely a matter of ego or optimism. Dunning and Kruger argue that incompetent individuals suffer a “dual burden”:

- They lack the skill to perform the task correctly.

- They lack the metacognitive skill to recognize that they are performing incorrectly.24

Studies show that participants scoring in the 12th percentile on logic and grammar tests estimated, on average, that their performance was in the 62nd percentile.25 As individuals gain true expertise, their confidence often drops initially (as they realize the complexity of the field) before rising again. Experts, in turn, often underestimate their own relative ability because they assume tasks easy for them are easy for everyone.4

4. The Cost of Bias in High-Stakes Domains

The abstract mechanisms of bias translate into concrete, often catastrophic, outcomes when applied to professional decision-making.

4.1 Medical Diagnostics: The Fatal Flaw

In medicine, cognitive biases are a leading cause of diagnostic error, which affects millions of patients annually. Research analyzing malpractice claims indicates that over 50% of diagnostic errors involve cognitive biases.26

4.1.1 Key Medical Biases

| Bias | Definition in Medical Context | Clinical Consequence |
| --- | --- | --- |
| Premature Closure | Deciding on a diagnosis before gathering all relevant data.27 | Stopping the workup too early; missing the actual, often more serious, pathology. |
| Anchoring | Fixating on the initial symptom or the first clinician’s impression.27 | Failing to adjust the diagnosis despite new, contradictory lab results or imaging. |
| Diagnostic Momentum | Once a label is attached to a patient (e.g., “drug seeker”), it is accepted by subsequent providers.27 | The label becomes sticky, preventing independent re-evaluation by new teams. |
| Availability Bias | Judging a diagnosis as likely because of a recent, memorable case.28 | Over-diagnosing a rare condition because the doctor saw a case of it last week (recency). |

Residents and experienced physicians alike are susceptible. In one study, 88% of residents identified anchoring bias in their own diagnostic errors.28 The pressure of time and the complexity of cases (contextual factors) exacerbate these cognitive failures.28

4.2 Business Strategy and Project Management

In the corporate world, biases decimate shareholder value through failed mergers, disastrous product launches, and operational inefficiencies.

4.2.1 The Planning Fallacy and Optimism Bias

Executives routinely underestimate the time, costs, and risks of future projects while overestimating the benefits. This is the Planning Fallacy.29 It stems from taking an “inside view”—focusing on the specific details of the project—rather than an “outside view” regarding the statistical distribution of outcomes for similar projects.30

- Example: A team launching a new parking app might ignore the fact that 60% of similar municipal tech projects fail due to integration issues, believing their specific plan is unique and immune to these base rates.31

4.2.2 Sunk Cost Fallacy and Status Quo

The Sunk Cost Fallacy drives leaders to “throw good money after bad,” continuing to invest in failing initiatives because of the resources already spent.7 This is often compounded by the Status Quo Bias, a preference for the current state of affairs to avoid the regret associated with active change. Organizations resist innovation because the potential loss of the status quo looms larger than the potential gain of the new strategy.10
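
A forward-looking decision rule makes the logic explicit: only future costs and benefits enter the comparison. The sketch below is a simplified illustration; the function name, its parameters, and the figures in the example are hypothetical.

```python
def should_continue(expected_future_benefit: float,
                    remaining_cost: float,
                    sunk_cost: float = 0.0) -> bool:
    """Decide whether to keep funding an initiative.

    `sunk_cost` is accepted as an argument only to show that it is
    deliberately ignored: money already spent cannot be recovered by
    either choice, so it should not affect the decision.
    """
    return expected_future_benefit > remaining_cost


# Hypothetical project: 5,000,000 already spent, 800,000 still needed,
# but only 500,000 of benefit is expected from finishing.
print(should_continue(500_000, 800_000, sunk_cost=5_000_000))  # False
```

The answer is the same whether the sunk cost is zero or five million, which is exactly the property the fallacy violates.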

4.3 Behavioral Finance: The Psychology of Money

Traditional finance assumes rational actors; behavioral finance recognizes that investors are driven by fear, greed, and bias.

4.3.1 Loss Aversion and the Disposition Effect

Loss Aversion is the observation that the psychological pain of losing $1,000 is about twice as intense as the pleasure of gaining $1,000.32

- Manifestation: This leads to the Disposition Effect, where investors hold onto losing stocks for too long (hoping to break even and avoid realizing the loss) and sell winning stocks too soon (to lock in the gain and eliminate the risk of it disappearing).34

- Myopic Loss Aversion: Investors who check their portfolios frequently are more likely to see short-term losses (which are common). The pain of these losses leads them to invest too conservatively, missing out on long-term equity premiums.32
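
The “about twice as intense” relationship can be sketched as a piecewise-linear value function. This is a deliberate simplification: the coefficient of 2.0 is taken from the description above, and the linear shape, the function names, and the example gambles are assumptions made for illustration.

```python
LOSS_AVERSION = 2.0  # assumption: losses weigh about twice as much as equal gains


def subjective_value(outcome: float, loss_aversion: float = LOSS_AVERSION) -> float:
    """Felt value of a monetary outcome; losses are scaled up by `loss_aversion`."""
    return outcome if outcome >= 0 else loss_aversion * outcome


def gamble_value(p_win: float, gain: float, loss: float,
                 loss_aversion: float = LOSS_AVERSION) -> float:
    """Expected felt value of winning `gain` with probability `p_win`, else losing `loss`."""
    return (p_win * subjective_value(gain, loss_aversion)
            + (1 - p_win) * subjective_value(-loss, loss_aversion))


# A fair coin flip for +/- $1,000 has zero expected monetary value but feels like a loss.
print(gamble_value(0.5, 1000, 1000))  # -500.0
# Under this assumption the gain must be about twice the loss before the bet feels neutral.
print(gamble_value(0.5, 2000, 1000))  # 0.0
```

This helps explain why investors who evaluate positions frequently, and therefore encounter many small losses, tend to feel worse than their long-run returns would justify.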

4.3.2 Herding and Overconfidence

Herding occurs when investors mimic the actions of the crowd, assuming the collective possesses superior information. This drives asset bubbles and subsequent crashes.19 Overconfidence Bias leads investors to trade too frequently, believing they can time the market, which statistically leads to lower returns due to transaction costs and poor timing.19

5. Interpersonal Dynamics: Attribution and Conflict

Biases also distort our social reality, governing how we perceive the actions and intentions of others.

5.1 The Fundamental Attribution Error (FAE)

The FAE is the tendency to overemphasize dispositional (personality-based) explanations for others’ behavior while underemphasizing situational explanations.36

- Self-Serving Bias: When we fail, it is due to external circumstances (bad luck, traffic, difficult questions). When we succeed, it is due to internal traits (talent, hard work).38

- The Error: When others fail, we attribute it to their character (laziness, incompetence).

- Impact: In relationships, this leads to toxic conflict cycles. If a partner forgets a chore, the FAE interprets it as “they don’t care about me” (character) rather than “they were stressed at work” (situation).37

5.2 The Halo and Horn Effects

The Halo Effect occurs when a positive impression in one area (e.g., physical attractiveness, confidence) influences opinion in an unrelated area (e.g., intelligence, honesty).18 Conversely, the Horn Effect allows a single negative trait to tarnish the entire perception of an individual. In hiring, this can lead to the rejection of qualified candidates based on superficial characteristics, while in relationships, it can lead to the idealization of a partner, blinding the individual to red flags.38

6. Quantitative Measurement of Rationality

Can susceptibility to bias be measured? While traditional IQ tests measure algorithmic cognitive capacity, they do not measure “dysrationalia”—the inability to think rationally despite adequate intelligence.

6.1 The Cognitive Reflection Test (CRT)

The CRT, developed by Shane Frederick, is a performance-based measure of the tendency to override an incorrect System 1 “gut” response and engage in System 2 reflection.5 It is considered a potent predictor of rational thinking.

The Three Classic CRT Items 40:

- A bat and a ball cost $1.10 in total. The bat costs $1.00 more than the ball. How much does the ball cost?

  - Intuitive Answer: 10 cents.

  - Correct Answer: 5 cents.

- If it takes 5 machines 5 minutes to make 5 widgets, how long would it take 100 machines to make 100 widgets?

  - Intuitive Answer: 100 minutes.

  - Correct Answer: 5 minutes.

- In a lake, there is a patch of lily pads. Every day, the patch doubles in size. If it takes 48 days for the patch to cover the entire lake, how long would it take for the patch to cover half of the lake?

  - Intuitive Answer: 24 days.

  - Correct Answer: 47 days.
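
The correct answers above can be checked with a few lines of arithmetic. The sketch below is one way to work them through; the function names are introduced here for illustration, and exact fractions are used to avoid floating-point rounding on the currency amounts.

```python
from fractions import Fraction


def ball_price(total=Fraction(110, 100), difference=Fraction(1)):
    """Solve ball + (ball + difference) = total for the price of the ball."""
    return (total - difference) / 2


def minutes_for(machines, widgets, rate_per_machine=Fraction(1, 5)):
    """Minutes for `machines` working in parallel to make `widgets`.

    5 machines making 5 widgets in 5 minutes means each machine makes
    1 widget every 5 minutes, i.e. 1/5 widget per minute.
    """
    return widgets / (machines * rate_per_machine)


def days_to_cover_half(days_to_cover_all=48):
    """A patch that doubles daily is half its final size exactly one day earlier."""
    return days_to_cover_all - 1


print(float(ball_price()))    # 0.05
print(minutes_for(100, 100))  # 5
print(days_to_cover_half())   # 47
```

The intuitive answer to the first item fails a simple check: a 10-cent ball implies a $1.10 bat, for a total of $1.20 rather than $1.10.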

Research shows that even students at elite universities often fail these questions. Scoring low on the CRT correlates with high susceptibility to the anchoring bias, the gambler’s fallacy, and overconfidence.5 It measures “cognitive decoupling”—the ability to separate the intuitive signal from the logical requirement.42

6.2 The Rationality Quotient (RQ)

Keith Stanovich and colleagues have proposed the Comprehensive Assessment of Rational Thinking (CART) to measure the “Rationality Quotient” (RQ).43 Unlike IQ, which measures processing speed and memory, RQ measures:

- Probabilistic and Statistical Reasoning: Understanding base rates and probability.

- Scientific Reasoning: Testing hypotheses and falsification.

- Avoidance of Miserly Processing: Using the CRT and other measures.

- Contaminated Mindware: The presence of anti-scientific or superstitious beliefs that hinder rational thought.43

6.3 The Implicit Association Test (IAT)

The IAT is widely used to measure “implicit bias”—unconscious associations between groups (e.g., racial minorities) and attributes (e.g., “bad” or “dangerous”).45 However, it is the subject of intense academic controversy.

- Critique: Meta-analyses have shown that the IAT has low test-retest reliability (your score can change dramatically if you take it again a week later) and a weak correlation with actual discriminatory behavior.46

- Interpretation: Critics argue it may measure familiarity with cultural stereotypes rather than personal endorsement of them. While useful for population-level research, its validity for individual diagnostics is questionable.47

7. The Science of Debiasing: Mitigation Strategies

Given that biases are largely unconscious and automatic, “trying harder” to be objective or merely being aware of biases is often ineffective.48 Effective mitigation requires structural interventions known as “Cognitive Forcing Functions” (CFFs) and environmental redesign.

7.1 Cognitive Forcing Functions (CFFs)

CFFs are constraints applied at the moment of decision-making to disrupt heuristic reasoning and force the engagement of System 2 analytical thinking.49

7.1.1 Clinical and Operational CFFs

- Diagnostic Time-outs: A mandated pause in a clinical setting to ask, “What data does not fit my current hypothesis?” This directly counters confirmation bias and premature closure.49

- Rule Out Worst-Case Scenario (ROWS): A forcing function requiring a physician to explicitly disprove the most dangerous potential diagnosis before accepting a benign one.

- Checklists: As championed in aviation and surgery, checklists offload the cognitive burden of memory, preventing errors of omission caused by fatigue or overconfidence.50

7.2 The Pre-Mortem Technique

The Pre-mortem, developed by Gary Klein, is a specific strategy to counter the Planning Fallacy and Groupthink in strategic projects.51 Unlike a risk assessment (which asks “what might go wrong?”), the Pre-mortem demands a shift in temporal perspective.

Step-by-Step Implementation:

- Preparation: Bring the key stakeholders together after the plan is formed but before execution begins.

- The Hypothetical: The leader announces: “Imagine we are two years in the future. The project has failed spectacularly. It is a total disaster.”53

- Generation: Individuals independently write down every reason why it failed. This unleashes “prospective hindsight,” which research shows generates 30% more potential problems than standard brainstorming.31

- Consolidation: The team shares the reasons, prioritizing the most likely and destructive.

- Mitigation: The plan is revised to specifically address these identified failure modes.51
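
The Consolidation step, which prioritizes the most likely and most destructive reasons, can be supported by a simple scoring pass. The sketch below assumes a hypothetical 1-to-5 rating for likelihood and severity and ranks by their product; this scoring scheme, the class name, and the example reasons are illustrative assumptions, not part of Klein's method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureReason:
    description: str
    likelihood: int  # assumed scale: 1 (unlikely) to 5 (very likely)
    severity: int    # assumed scale: 1 (minor) to 5 (project-ending)

    @property
    def priority(self) -> int:
        """Simple product score; higher means address it first."""
        return self.likelihood * self.severity


def prioritize(reasons):
    """Return the reasons sorted from highest to lowest priority score."""
    return sorted(reasons, key=lambda r: r.priority, reverse=True)


# Hypothetical reasons collected during the Generation step.
reasons = [
    FailureReason("Budget frozen mid-project", likelihood=2, severity=5),
    FailureReason("Key vendor misses integration deadline", likelihood=4, severity=4),
    FailureReason("Users reject the new workflow", likelihood=3, severity=5),
]

for reason in prioritize(reasons):
    print(reason.priority, reason.description)
```

A product score is only one possible heuristic; teams may prefer to discuss the ranking rather than accept it mechanically.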

7.3 Reference Class Forecasting (RCF)

To neutralize Optimism Bias in project estimation, RCF forces the “Outside View”.54

Implementation Guide:

- Identify the Reference Class: Select a set of past projects that are broadly similar to the current one (e.g., “all IT migrations in the last 5 years”).

- Establish the Distribution: Determine the empirical outcome distribution for this class (e.g., “average cost overrun was 40%, average delay was 6 months”).

- Compare: Place the current project on this distribution curve. If the current plan assumes zero delay, but 90% of the reference class had delays, the plan must be adjusted to the mean of the reference class.54
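
The three steps above can be sketched as a short calculation. The example below is a minimal illustration under stated assumptions: the overrun figures are invented so that their mean matches the 40% used in the text, the function names are hypothetical, and the nearest-rank percentile is only one of several reasonable ways to read a position off the distribution.

```python
import math
from statistics import mean


def uplift_at(past_overruns, share):
    """Nearest-rank percentile: the overrun that `share` of past projects stayed at or below."""
    ordered = sorted(past_overruns)
    rank = max(1, math.ceil(share * len(ordered)))
    return ordered[rank - 1]


def reference_class_forecast(inside_view_estimate, past_overruns, share=None):
    """Adjust an inside-view estimate using overruns seen in the reference class.

    past_overruns: fractional overruns, e.g. 0.40 means 40% over plan.
    share: if None, apply the mean overrun; otherwise apply the overrun at
           that percentile (e.g. 0.8 for a more cautious budget).
    """
    uplift = mean(past_overruns) if share is None else uplift_at(past_overruns, share)
    return inside_view_estimate * (1 + uplift)


# Hypothetical reference class: cost overruns of five comparable IT migrations.
overruns = [0.10, 0.25, 0.40, 0.55, 0.70]

print(round(reference_class_forecast(1_000_000, overruns)))             # 1400000
print(round(reference_class_forecast(1_000_000, overruns, share=0.8)))  # 1550000
```

Using the mean gives a central estimate; choosing a higher percentile trades a larger budget for a lower chance of exceeding it.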

7.4 Bias Habit-Breaking Training

While general diversity training is often ineffective, “Bias Habit-Breaking” interventions have shown promise.56 These treat bias as a bad habit that can be broken through specific cognitive replacements:

- Stereotype Replacement: Recognizing a stereotypical response and consciously replacing it with a non-stereotypical thought.

- Individuation: Consciously gathering specific information about a person to prevent group-based inferences.

- Perspective Taking: Deliberately imagining the world from the other person’s viewpoint, which reduces the Fundamental Attribution Error.36

8. Conclusion: Toward Epistemic Humility

The architecture of the human mind is a double-edged sword. The same heuristics that allow us to navigate a complex world with speed and efficiency also render us vulnerable to systematic errors in judgment. From the “dual burden” of the Dunning-Kruger effect to the “inside view” of the Planning Fallacy, these biases shape the trajectory of our lives, our organizations, and our societies.

The research presented here leads to a crucial conclusion: rationality is not a default setting, but a discipline. It requires “epistemic humility”: the recognition that our intuitive certainty is often an illusion. “Trying harder” to be objective is insufficient. We must instead build environments and processes (Cognitive Forcing Functions, Pre-mortems, and checklists) that protect us from our own cognitive architecture.

By understanding the mechanisms of the “cognitive miser,” recognizing the specific manifestations of bias in our fields, and implementing structural defenses, we can transition from the intuitive errors of System 1 to the clearer, more accurate reasoning of System 2. The goal is not to eliminate bias entirely—an impossible task for a human mind—but to manage it, reducing the “architecture of error” to a manageable risk rather than a fatal flaw.

9. Appendix: Summary of Key Biases and Mitigation Strategies

| Bias Category | Specific Bias | Definition | Impact | Mitigation Strategy |
| --- | --- | --- | --- | --- |
| Information Processing | Confirmation Bias | Favoring information that confirms existing beliefs.11 | Polarization, poor strategy, medical error. | “Consider the Opposite,” Devil’s Advocate, Blind Analysis. |
| Information Processing | Anchoring | Relying heavily on the first piece of information.20 | Skewed negotiations, pricing errors. | Remove the anchor, re-anchor with counter-data, use checklists. |
| Information Processing | Availability | Judging probability by ease of recall.14 | Fear of rare events, ignoring common risks. | Consult base rates/statistics, ignore “vivid” anecdotes. |
| Self-Perception | Dunning-Kruger | Overestimating competence due to lack of skill.24 | Incompetent leadership, resistance to training. | Metacognitive training, objective peer feedback. |
| Self-Perception | Fundamental Attribution Error | Over-weighting disposition over situation for others.36 | Relationship conflict, toxic culture. | Perspective-taking, “Assume positive intent.” |
| Strategic/Financial | Sunk Cost Fallacy | Continuing failed endeavors due to past investment.18 | Wasted resources, escalating commitment. | Evaluate based on future utility only; rotate decision makers. |
| Strategic/Financial | Planning Fallacy | Underestimating time/cost of future tasks.29 | Project delays, budget overruns. | Reference Class Forecasting (The “Outside View”). |
| Strategic/Financial | Loss Aversion | Fearing loss more than valuing equivalent gain.32 | Risk aversion, panic selling. | Reframing, focusing on long-term portfolio goals. |

Works cited

- What Is Cognitive Bias? | Definition, Types & Examples – Scribbr, accessed January 5, 2026, https://www.scribbr.com/research-bias/cognitive-bias/

- Mechanisms: Cognitive Biases and Heuristics – Understanding and Overcoming Hate, accessed January 5, 2026, https://www.overcominghateportal.org/mechanisms-cognitive-biases-and-heuristics.html

- Cognitive bias – Wikipedia, accessed January 5, 2026, https://en.wikipedia.org/wiki/Cognitive_bias

- List of cognitive biases – Wikipedia, accessed January 5, 2026, https://en.wikipedia.org/wiki/List_of_cognitive_biases

- Cognitive Reflection Test – Examples of Reacting vs Checking, accessed January 5, 2026, https://mannhowie.com/cognitive-reflection-test

- Rethinking clinical decision-making to improve clinical reasoning – Frontiers, accessed January 5, 2026, https://www.frontiersin.org/journals/medicine/articles/10.3389/fmed.2022.900543/full

- THE IMPACT OF COGNITIVE BIASES ON DECISION-MAKING IN EVERYDAY LIFE – IJRAR.org, accessed January 5, 2026, https://www.ijrar.org/papers/IJRAR19D5637.pdf

- Confirmation Bias – The Decision Lab, accessed January 5, 2026, https://thedecisionlab.com/biases/confirmation-bias

- Exploring Selective Exposure and Confirmation Bias as Processes Underlying Employee Work Happiness: An Intervention Study – Frontiers, accessed January 5, 2026, https://www.frontiersin.org/journals/psychology/articles/10.3389/fpsyg.2016.00878/full

- Identify Cognitive Biases in Business Decision-Making – Mailchimp, accessed January 5, 2026, https://mailchimp.com/resources/what-is-cognitive-bias/

- 12 Types of Cognitive Bias That Influence Your Thinking – Verywell Mind, accessed January 5, 2026, https://www.verywellmind.com/cognitive-biases-distort-thinking-2794763

- Selective exposure theory – Wikipedia, accessed January 5, 2026, https://en.wikipedia.org/wiki/Selective_exposure_theory

- List of Cognitive Biases and Heuristics – The Decision Lab, accessed January 5, 2026, https://thedecisionlab.com/biases

- Availability Heuristic – The Decision Lab, accessed January 5, 2026, https://thedecisionlab.com/biases/availability-heuristic

- Availability heuristic – Wikipedia, accessed January 5, 2026, https://en.wikipedia.org/wiki/Availability_heuristic

- How do People Judge Risk? Availability may Upstage Affect in the Construction of Risk Judgments – NIH, accessed January 5, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC9292208/

- 3 Things Everyone Should Know About the Availability Heuristic – Farnam Street, accessed January 5, 2026, https://fs.blog/mental-model-availability-bias/

- 10 Cognitive Biases That Can Derail Your Business Strategy – StratNavApp, accessed January 5, 2026, https://www.stratnavapp.com/Articles/cognitive-biases

- Decoding Cognitive Biases: What every Investor needs to be aware of, accessed January 5, 2026, https://magellaninvestmentpartners.com/insights/decoding-cognitive-biases-what-every-investor-needs-to-be-aware-of/

- Anchoring effect – Wikipedia, accessed January 5, 2026, https://en.wikipedia.org/wiki/Anchoring_effect

- Individual Differences in Anchoring Effect: Evidence for the Role of Insufficient Adjustment, accessed January 5, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC6396698/

- Anchoring Bias – The Decision Lab, accessed January 5, 2026, https://thedecisionlab.com/biases/anchoring-bias

- What’s the relationship between priming and anchoring? – Psychology Stack Exchange, accessed January 5, 2026, https://psychology.stackexchange.com/questions/4804/whats-the-relationship-between-priming-and-anchoring

- The Dunning-Kruger Effect: An Overestimation of Capability – Verywell Mind, accessed January 5, 2026, https://www.verywellmind.com/an-overview-of-the-dunning-kruger-effect-4160740

- Unskilled and unaware of it: how difficulties in recognizing one’s own incompetence lead to inflated self-assessments – PubMed, accessed January 5, 2026, https://pubmed.ncbi.nlm.nih.gov/10626367/

- The Impact of Cognitive Bias on Diagnostic Error – Coverys, accessed January 5, 2026, https://www.coverys.com/expert-insights/the-impact-of-cognitive-bias-on-diagnostic-error

- An elderly woman with ‘heart failure’: Cognitive biases and diagnostic error – Cleveland Clinic Journal of Medicine, accessed January 5, 2026, https://www.ccjm.org/content/ccjom/82/11/745.full.pdf

- Seen Through Their Eyes: Residents’ Reflections on the Cognitive and Contextual Components of Diagnostic Errors in Medicine – NIH, accessed January 5, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC3703642/

- Cognitive Biases that Affect Strategic Planning: The Top 10 | LSA Global, accessed January 5, 2026, https://lsaglobal.com/blog/top-10-cognitive-biases-that-affect-strategic-planning/

- Biases in decision-making: A guide for CFOs | McKinsey, accessed January 5, 2026, https://www.mckinsey.com/capabilities/strategy-and-corporate-finance/our-insights/biases-in-decision-making-a-guide-for-cfos

- Bias Busters: Premortems: Being smart at the start – McKinsey, accessed January 5, 2026, https://www.mckinsey.com/capabilities/strategy-and-corporate-finance/our-insights/bias-busters-premortems-being-smart-at-the-start

- Loss Aversion – The Decision Lab, accessed January 5, 2026, https://thedecisionlab.com/biases/loss-aversion

- Loss Aversion Bias Result – Charles Schwab, accessed January 5, 2026, https://www.schwab.com/investing-bias/loss-aversion

- Loss Aversion | Definition + Investing Bias Example – Wall Street Prep, accessed January 5, 2026, https://www.wallstreetprep.com/knowledge/loss-aversion/

- 5 Behavioral Biases That Can Impact Your Investing Decisions – William & Mary, Raymond A. Mason School of Business, accessed January 5, 2026, https://online.mason.wm.edu/blog/behavioral-biases-that-can-impact-investing-decisions

- Attribution Theory—And Why Your Relationships Hinge on It – Psychology Today, accessed January 5, 2026, https://www.psychologytoday.com/us/blog/beyond-school-walls/202403/attribution-theory-and-why-your-relationships-hinge-on-it

- Los Angeles Marriage & Family Therapists: Fundamental Attribution Error – Root to Rise Therapy, accessed January 5, 2026, https://rootrisetherapyla.com/blog/2024/fundamental-attribution-error

- How Cognitive Biases Impact Our Relationships – Psychology Today, accessed January 5, 2026, https://www.psychologytoday.com/us/blog/here-there-and-everywhere/202309/how-cognitive-biases-impact-our-relationships

- Cognitive Reflection and Decision Making – Yale Law School, accessed January 5, 2026, https://law.yale.edu/sites/default/files/area/workshop/leo/document/Frederick_CognitiveReflectionandDecisionMaking.pdf

- Cognitive reflection test – Wikipedia, accessed January 5, 2026, https://en.wikipedia.org/wiki/Cognitive_reflection_test

- Cognitive Reflection Test – Embrace Autism, accessed January 5, 2026, https://embrace-autism.com/cognitive-reflection-test/

- Actively Open-Minded Thinking and Its Measurement – MDPI, accessed January 5, 2026, https://www.mdpi.com/2079-3200/11/2/27

- The Rationality Quotient: Toward a Test of Rational Thinking – MIT Press, accessed January 5, 2026, https://direct.mit.edu/books/monograph/4086/The-Rationality-QuotientToward-a-Test-of-Rational

- The Development of a Test of Rational Thinking – John Templeton Foundation, accessed January 5, 2026, https://www.templeton.org/grant/the-development-of-a-test-of-rational-thinking

- The implicit association test: Shining a light on hidden beliefs – American Psychological Association, accessed January 5, 2026, https://www.apa.org/research-practice/conduct-research/hidden-association

- The Implicit Association Test – American Academy of Arts and Sciences, accessed January 5, 2026, https://www.amacad.org/publication/daedalus/implicit-association-test

- Invalid Claims About the Validity of Implicit Association Tests by Prisoners of the Implicit Social-Cognition Paradigm – PMC – NIH, accessed January 5, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC8167921/

- Interventions to Reduce Implicit Bias in High-Stakes Professional Judgements: A Systematic Review – PMC, accessed January 5, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC12649508/

- To Trust or to Think: Cognitive Forcing Functions Can Reduce Overreliance on AI in AI-assisted Decision-making – arXiv, accessed January 5, 2026, https://arxiv.org/pdf/2102.09692

- Cognitive debiasing 2: impediments to and strategies for change – PMC – NIH, accessed January 5, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC3786644/

- Conducting a Premortem – Alliance for Decision Education, accessed January 5, 2026, https://alliancefordecisioneducation.org/resources/conducting-a-pre-mortem/

- 6 Qs to Conducting a Premortem: Who, What, When, Where, Why, and How – Strategic Decision Solutions, accessed January 5, 2026, https://strategicdecisionsolutions.com/premortem-method/

- Decision-Making Guide: Pre-Mortem & Regret Score Method – Brainzyme, accessed January 5, 2026, https://www.brainzyme.com/blogs/work-life-tips/how-to-make-better-decisions-pre-mortem-regret-score-method

- Reference Class Forecasting Guide – Scribd, accessed January 5, 2026, https://www.scribd.com/document/239151208/Reference-Class-Forecasting

- From Nobel Prize to project management – PMI, accessed January 5, 2026, https://www.pmi.org/learning/library/nobel-project-management-reference-class-forecasting-8068

- Developing scientifically validated bias and diversity trainings that work: empowering agents of change to reduce bias, create inclusion, and promote equity – PMC, accessed January 5, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC10120861/

- Fundamental Attribution Error: Shifting the Blame Game – Positive Psychology, accessed January 5, 2026, https://positivepsychology.com/fundamental-attribution-error/