The Secret Language of Faces: An Introduction to Reading Emotions

Have you ever found yourself in a conversation where someone’s words conveyed one message, but their face told a completely different story? That subtle tightening of the lips or a fleeting flash of worry in their eyes can speak volumes, revealing a deeper layer of communication beneath speech. This powerful, non-verbal dialogue is the language of facial expressions, a universal system for revealing our inner emotional states.

This comprehensive guide is designed for beginners eager to understand this secret language of emotions. We will explore the fascinating science behind our most common expressions, from the universally recognized ‘basic emotions’ to the tiny, split-second flashes known as microexpressions that can betray hidden feelings. Finally, we’ll examine how this science is being applied in real-world scenarios—from clinical diagnosis to advanced artificial intelligence—and how AI is pushing the boundaries of what it means to ‘read’ a face.

1. The Universal Language: Are Some Expressions the Same Everywhere?

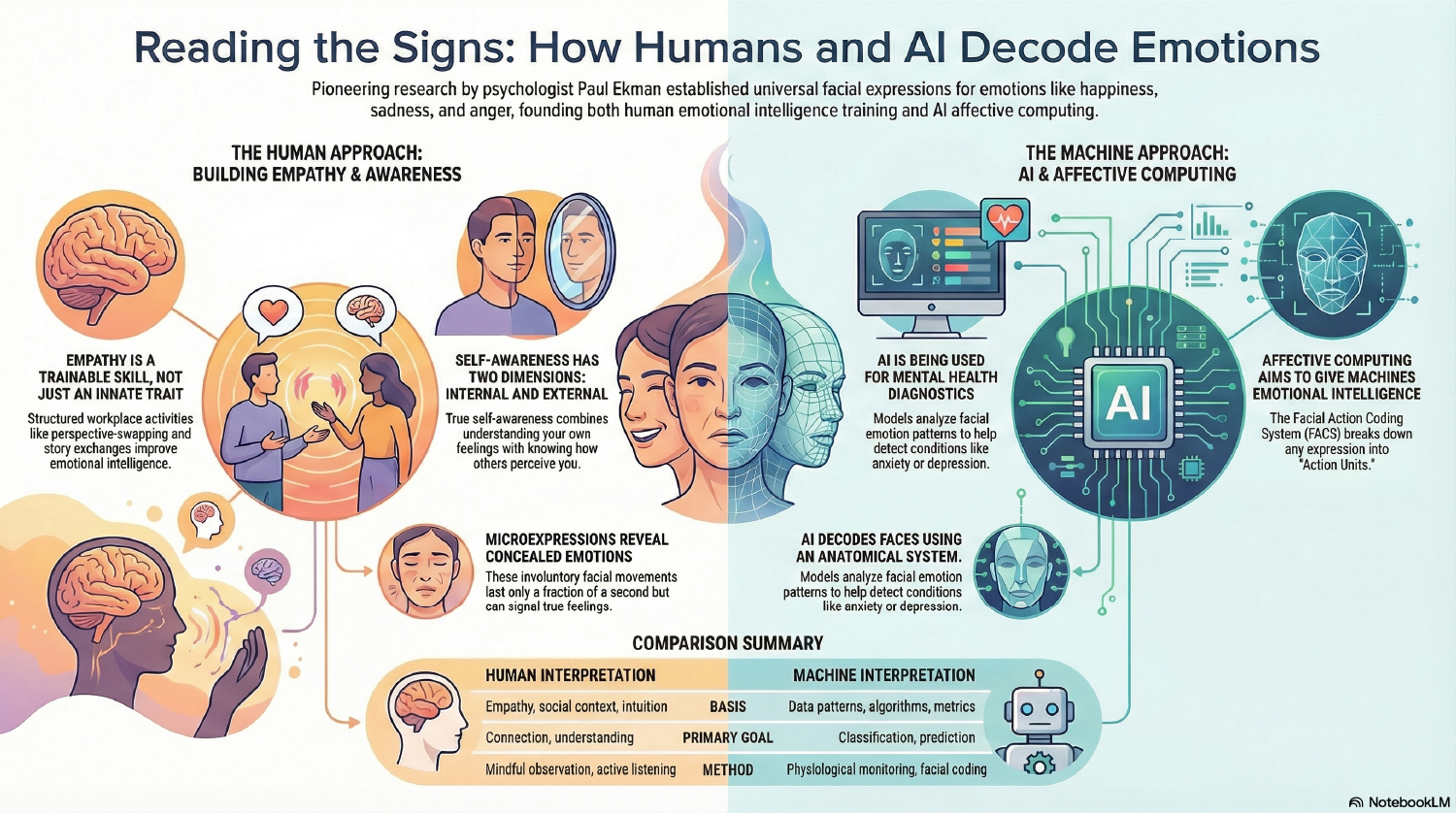

At the core of understanding facial expressions is the concept of “basic emotions”—a set of feelings believed to be universally expressed and recognized across all human cultures. This groundbreaking idea was championed by psychologist Paul Ekman, whose extensive research demonstrated that a few core emotional expressions are a shared part of the human experience, regardless of cultural background.

Ekman’s foundational work identified six primary emotions that exhibit distinct and reliable facial signals. These expressions are considered so fundamental that they are thought to be ingrained in our biology. The idea predates Ekman: Charles Darwin had already observed strong similarities in how people from diverse cultures express emotion, and he concluded that these expressions were likely universal. While these six emotions form the bedrock of universality theory, scientific debate continues, and Ekman himself later expanded the list to include further emotions such as contempt, shame, and relief.

The table below outlines these six basic emotions and the key facial movements associated with each, providing a crucial foundation for learning to read faces.

| Emotion | Key Facial Indicators |
|---|---|
| Happiness | Upturned corners of the mouth, crow’s feet around the eyes. |
| Sadness | Downturned mouth corners, drooping eyelids. |
| Anger | Tightened lips, furrowing of the brows, glaring eyes. |
| Fear | Wide eyes, raised eyebrows, and an open mouth. |
| Surprise | Raised eyebrows, opened eyes, and a dropped jaw. |
| Disgust | Wrinkled nose, raised upper lip. |

These facial movements are so precisely defined that researchers catalogue them using the Facial Action Coding System (FACS). For example, a genuine smile is a specific combination of Action Unit 6 (which creates crow’s feet) and Action Unit 12 (which pulls the lip corners up).
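To make the Action Unit idea concrete, here is a minimal Python sketch that treats an expression as a set of active Action Unit numbers and checks for the smile combination described above. Only the AU 6 and AU 12 pairing comes from the text; the function name `classify_smile` and its three labels are hypothetical, chosen purely for illustration, and real FACS coding also records intensity and timing.

```python
# Action Units named in the text: AU 6 (crow's feet) and AU 12 (lip corners up).
GENUINE_SMILE_AUS = frozenset({6, 12})


def classify_smile(active_aus):
    """Label a set of active Action Unit numbers (hypothetical labels)."""
    active = set(active_aus)
    if GENUINE_SMILE_AUS <= active:   # both AU 6 and AU 12 are present
        return "genuine"
    if 12 in active:                  # lip corners move, but the eyes do not
        return "lip-corners-only"
    return "no smile"


print(classify_smile({6, 12}))
print(classify_smile({12}))
```

A set-based representation keeps the check readable: an expression matches a pattern when the pattern's Action Units are a subset of the units observed on the face.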

While these basic emotions are often easy to spot, our faces can also reveal feelings we’re trying to hide in tiny, split-second flashes.

2. The Telltale Flash: Uncovering Microexpressions

A microexpression is a rapid, involuntary facial movement that reveals an underlying emotional state. These fleeting expressions are a form of emotional leakage, in which a concealed emotion surfaces on the face despite efforts to hide it. The usual explanation is that voluntary and involuntary facial expressions are driven by different neural pathways. When a person consciously attempts to suppress an emotion (which would otherwise show as a full “macroexpression”), the involuntary system can still fire, causing a brief, uncontrollable “leak” that offers an unfiltered glimpse of the person’s genuine feelings.

While there isn’t perfect consensus on their exact duration, research estimates microexpressions to be remarkably brief:

- They can last for less than half a second.

- Some flash on and off the face in less than one-quarter of a second.

- They can be as brief as 1/25th to 1/5th of a second.
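To get a feel for how short these durations are, the sketch below converts them into video frames. The duration figures are the ones listed above; the frame rates (30 and 120 frames per second) and the helper names `frames_spanned` and `is_micro` are assumptions made only for this illustration.

```python
from fractions import Fraction

# Duration figures quoted in the list above, in seconds.
SHORTEST = Fraction(1, 25)
LONGEST = Fraction(1, 5)
UPPER_BOUND = Fraction(1, 2)  # "less than half a second"


def frames_spanned(duration_s, fps):
    """Number of video frames covered by an expression of the given length."""
    return float(Fraction(duration_s) * fps)


def is_micro(duration_s, limit=UPPER_BOUND):
    """True if the duration falls under the half-second upper bound."""
    return duration_s < limit


# Assumed camera frame rates, for illustration only.
for fps in (30, 120):
    print(fps, frames_spanned(SHORTEST, fps), frames_spanned(LONGEST, fps))
```

At an assumed 30 frames per second, the briefest microexpression covers barely more than one frame, which helps explain why these flashes are so easy to miss with the naked eye.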

The key takeaway is that microexpressions differ from regular facial expressions primarily in their duration, not their form. A microexpression of anger, for instance, involves the same muscle movements as a full expression of anger—it just happens in the blink of an eye. Because these fleeting expressions can reveal concealed emotions, many people believe they are a foolproof way to catch a liar, but the science tells a more complicated story.

3. The Myth of the Human Lie Detector

One of the biggest misconceptions about reading faces is that microexpressions are an easy and reliable way to detect lies. While they can indeed reveal a hidden emotion, this emotion is not necessarily proof of deception. Academic research has consistently shown that the behavioral signs of lying are actually quite “faint” and that very few behaviors are reliably related to deceit.

This scientific reality, however, has not prevented these myths from becoming institutionalized. A prominent example is the U.S. Transportation Security Administration’s (TSA) multimillion-dollar SPOT (Screening of Passengers by Observation Techniques) program. This program trained officers to identify potential threats based on a checklist of behaviors and expressions believed to indicate stress and deception. Despite its widespread implementation, the program was heavily criticized by the Government Accountability Office (GAO) and academic experts for relying on a scientifically flawed methodology. It stands as a case study in how a misunderstanding of the science can harden into failed policy.

Many of the cues that people—including some law enforcement personnel—believe indicate deception are actually just signs of stress or discomfort. These feelings can be experienced by both liars and honest individuals in high-stakes situations. Worse yet, research shows that the more people (including trained professionals) focus on these supposed ‘tells,’ the less accurate their judgments become.

Common Misconceptions: Signs of Stress, Not Deceit

- Gaze aversion (looking away): Often mistaken for dishonesty, this is more commonly a sign of nervousness or cognitive effort.

- Fidgeting: Restless movements of the hands or feet are a common reaction to stress, not necessarily deceit.

- Speech errors: Stuttering or verbal mistakes are more likely linked to anxiety than to lying.

- Posture shifts: Like fidgeting, changing one’s posture is not a reliable indicator of deception.

A crucial finding from decades of research is that focusing solely on nonverbal cues can actually impair lie detection accuracy. It is often more fruitful to listen to the content of a person’s speech than to search for a single, giveaway expression.

While humans struggle to reliably detect deception from faces, researchers are now teaching artificial intelligence to recognize and interpret our emotional cues with remarkable results.

4. Teaching Machines to See Our Feelings: The Rise of Affective Computing

The field of affective computing is a cutting-edge area of artificial intelligence (AI) focused on making machines more emotionally intelligent. Its goal is to teach AI systems to recognize and respond to human emotions. By analyzing different types of data, these systems are learning to “see” and interpret our feelings in ways that often go far beyond human capability, opening new frontiers in understanding emotional intelligence.

AI systems utilize a multimodal approach, drawing on several data sources to understand a person’s emotional state:

- Facial Expressions: Using cameras and advanced computer vision to analyze the subtle movements of facial muscles.

- Emotional Speech: Analyzing vocal features like pitch, speed, volume, and tone to detect emotion in a person’s voice.

- Physiological Signals: Using sensors in devices like smartwatches to measure biological indicators such as heart rate, skin temperature, and galvanic skin response (a measure of subtle changes in sweat gland activity related to emotional arousal).
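One simple way to combine the data sources listed above is to let each one produce its own emotion scores and then average them. The sketch below shows this "late fusion" idea in Python. It is an assumption made for illustration, not a description of how any particular system works, and the modality names, scores, and function names are all hypothetical.

```python
def fuse_modalities(scores, weights=None):
    """Late fusion: weighted average of per-modality emotion scores.

    scores  maps modality name -> {emotion: score}
    weights maps modality name -> relative weight (defaults to equal weights)
    """
    if not scores:
        raise ValueError("need at least one modality")
    if weights is None:
        weights = {modality: 1.0 for modality in scores}
    total = sum(weights[modality] for modality in scores)
    fused = {}
    for modality, emotion_scores in scores.items():
        share = weights[modality] / total
        for emotion, score in emotion_scores.items():
            fused[emotion] = fused.get(emotion, 0.0) + share * score
    return fused


def top_emotion(fused):
    """Emotion with the highest fused score."""
    return max(fused, key=fused.get)


# Made-up scores from two hypothetical per-modality models.
example = {
    "face": {"happiness": 0.6, "fear": 0.4},
    "voice": {"happiness": 0.2, "fear": 0.8},
}
print(top_emotion(fuse_modalities(example)))
```

In this made-up example the face alone suggests happiness, but the voice points strongly toward fear, so the fused result favors fear. Combining signals in this way is one reason a multimodal system may catch what a single data source misses.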

This evolving technology has several promising real-world applications, particularly in improving health and our interactions with technology.

Healthcare and Mental Wellness

Emotionally aware AI holds enormous potential in healthcare. Wearable devices could track stress patterns over time, providing valuable insights to both users and doctors. This technology could also assist clinicians in diagnosing mental disorders like anxiety and depression by objectively observing a patient’s visual cues during interviews. In fact, one recent study demonstrated a hybrid AI model that achieved 81% accuracy in detecting mental disorders from facial cues alone. Researchers are even exploring whether facial cues could serve as a potential biomarker for identifying Autism Spectrum Disorder (ASD), offering new avenues for early detection and support.

Smarter Human-Computer Interaction

Affective computing aims to reduce the “emotional disconnect” between humans and machines. By understanding a user’s emotional state, digital assistants, learning tools, and other interactive systems could become more responsive and intuitive. This could make our daily interactions with technology feel more natural, supportive, and personalized.

Despite its promise, this technology faces significant challenges. If an AI is trained primarily on images of one demographic group, it may disproportionately misinterpret expressions from underrepresented populations. This poses a critical flaw if the technology is used in sensitive contexts like security screening or clinical diagnosis, highlighting the need for diverse and inclusive training data.
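A basic way to check for this kind of bias is to measure accuracy separately for each demographic group rather than reporting a single overall number. The Python sketch below assumes a list of `(group, true_label, predicted_label)` records. The group names, labels, and function names are hypothetical, and a real audit would need far more data and care.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Accuracy per group from (group, true_label, predicted_label) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, true_label, predicted in records:
        total[group] += 1
        correct[group] += int(true_label == predicted)
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(per_group):
    """Difference between the best-served and worst-served group."""
    return max(per_group.values()) - min(per_group.values())


# Made-up evaluation records, for illustration only.
records = [
    ("group_a", "happiness", "happiness"),
    ("group_a", "fear", "fear"),
    ("group_b", "happiness", "happiness"),
    ("group_b", "fear", "surprise"),
]
per_group = accuracy_by_group(records)
print(per_group, accuracy_gap(per_group))
```

A large gap between groups is a warning sign that the training data may not represent everyone equally, even when the overall accuracy looks acceptable.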

As this technology continues to evolve, it underscores just how much rich information is packed into every facial expression we make, revealing the profound complexity of nonverbal communication.

5. Conclusion: A Deeper Connection Through Understanding

Understanding the secret language of faces is a fascinating journey into the heart of human communication and emotional intelligence. We’ve seen that a set of basic emotional expressions appears to be shared across diverse cultures, even as scientific debate about the details continues. We’ve also learned that while fleeting microexpressions can offer a glimpse into concealed feelings, they are complex cues to emotional states, not simple or foolproof “lie detectors.” Finally, the rapid rise of artificial intelligence is opening new frontiers, allowing machines to interpret these intricate signals in ways that could transform fields like healthcare and human-computer interaction.

The true skill in reading facial expressions lies not in judging others, but in using this profound knowledge to foster empathy, deepen communication, and build a more compassionate understanding of the rich and complex emotional lives of those around us. By tuning into these nonverbal cues, we can cultivate stronger connections and navigate our social world with greater insight and sensitivity.